Infrastructure

All infrastructure is provisioned with Terraform from terraform/environments/production/, composed of seven reusable modules. The remote state lives in S3 (appointment-system-tfstate) with DynamoDB locking (terraform-locks). Terraform version: 1.9.8; AWS provider: ~> 5.0.

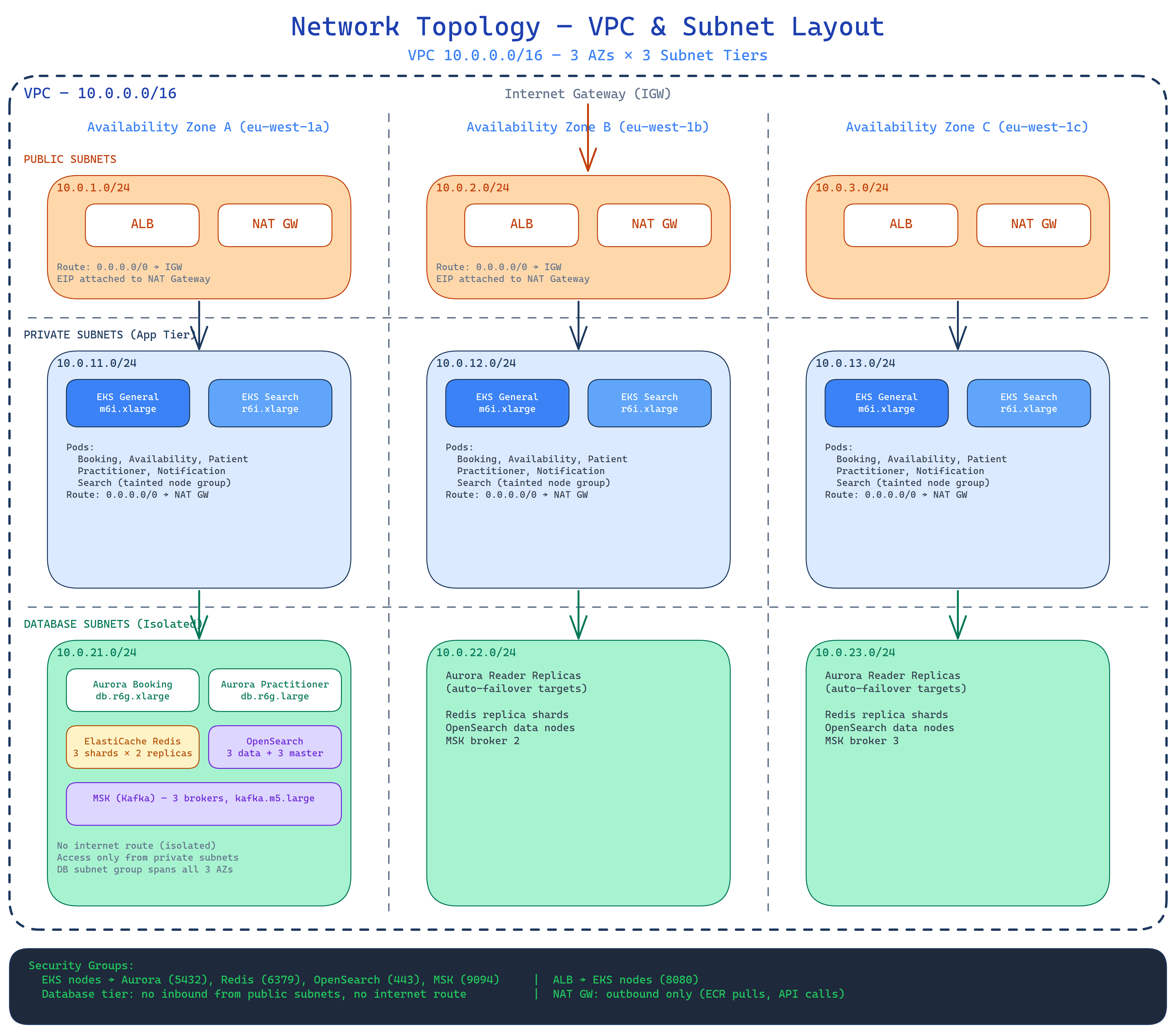

Network Topology

VPC Layout

- 3 Availability Zones, 9 subnets total (3 per tier, one per AZ)

- CIDR blocks carved from the VPC CIDR using `/4` increments, allocated by the `networking` module via `cidrsubnet`

- VPC endpoints for S3, ECR, and CloudWatch, so traffic to these services stays on the AWS backbone

| Tier | Subnets | Contents |
|---|---|---|
| Public (3) | One per AZ | ALB, NAT Gateways (one per AZ for AZ-local egress), Internet Gateway |
| Private (3) | One per AZ | EKS worker nodes, ElastiCache, OpenSearch, MSK brokers |
| Database (3) | One per AZ | RDS Aurora clusters only; route through private NAT |
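The subnet carving described above can be sanity-checked outside Terraform. The sketch below mimics Terraform's `cidrsubnet(prefix, newbits, netnum)` using Python's `ipaddress` module. The VPC CIDR `10.0.0.0/16`, the tier order, and the `netnum` assignment are assumptions for illustration only; the real values are defined in the `networking` module.

```python
import ipaddress

VPC_CIDR = "10.0.0.0/16"  # assumption: the real VPC CIDR is not stated in this document
NEWBITS = 4               # each subnet prefix is 4 bits longer than the VPC prefix
TIERS = ["public", "private", "database"]  # assumed ordering of tiers
AZ_COUNT = 3


def cidrsubnet(prefix: str, newbits: int, netnum: int) -> str:
    """Mimic Terraform's cidrsubnet(prefix, newbits, netnum)."""
    if not 0 <= netnum < 2 ** newbits:
        raise ValueError(f"netnum {netnum} does not fit in {newbits} bits")
    network = ipaddress.ip_network(prefix)
    # subnets() yields the child networks in address order
    return str(list(network.subnets(prefixlen_diff=newbits))[netnum])


def subnet_layout(vpc_cidr: str, tiers: list, az_count: int, newbits: int) -> dict:
    """Assign one subnet per (tier, AZ) pair, numbering tiers consecutively."""
    return {
        tier: [
            cidrsubnet(vpc_cidr, newbits, tier_index * az_count + az_index)
            for az_index in range(az_count)
        ]
        for tier_index, tier in enumerate(tiers)
    }


layout = subnet_layout(VPC_CIDR, TIERS, AZ_COUNT, NEWBITS)
for tier, cidrs in layout.items():
    print(tier, cidrs)
```

Under these assumptions a `/16` VPC offers sixteen possible `/20` blocks, of which nine are consumed, leaving room for additional tiers.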

Compute — Amazon EKS

Terraform module: terraform/modules/eks/

All six services run as Kubernetes Deployments on EKS with managed node groups.

| Node Group | Instance | Taint | Workloads |
|---|---|---|---|
| `general` | `m6i.xlarge` | None | Booking, Availability, Patient, Practitioner, Notification |
| `search` | `r6i.xlarge` | `workload=search:NoSchedule` | Search Service only |

The `search` node group uses memory-optimised instances because the Search Service holds large OpenSearch client caches. The `NoSchedule` taint keeps general workloads off those nodes; the Search Service's `values-search.yaml` adds the matching toleration and `nodeSelector`.
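To show why both the toleration and the `nodeSelector` are needed, here is a simplified model of the scheduling decision. It is a sketch, not the Kubernetes scheduler: it handles only `NoSchedule` taints with the `Equal` and `Exists` operators, and the node label `workload=search` used for the selector is an assumption, since the actual label is not given here.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Taint:
    key: str
    value: str
    effect: str


@dataclass(frozen=True)
class Toleration:
    key: str
    value: str
    effect: str
    operator: str = "Equal"


@dataclass
class Node:
    name: str
    labels: dict = field(default_factory=dict)
    taints: list = field(default_factory=list)


@dataclass
class Pod:
    name: str
    node_selector: dict = field(default_factory=dict)
    tolerations: list = field(default_factory=list)


def tolerates(toleration: Toleration, taint: Taint) -> bool:
    """A toleration matches when key and effect agree and the value check passes."""
    if toleration.key != taint.key or toleration.effect != taint.effect:
        return False
    return toleration.operator == "Exists" or toleration.value == taint.value


def can_schedule(pod: Pod, node: Node) -> bool:
    # nodeSelector: every requested label must be present on the node
    selector_ok = all(node.labels.get(k) == v for k, v in pod.node_selector.items())
    # taints: every NoSchedule taint must be tolerated by the pod
    taints_ok = all(
        any(tolerates(t, taint) for t in pod.tolerations)
        for taint in node.taints
        if taint.effect == "NoSchedule"
    )
    return selector_ok and taints_ok


# Node labels below are hypothetical; the taint comes from the table above.
general = Node("general-1", labels={"workload": "general"})
search = Node(
    "search-1",
    labels={"workload": "search"},
    taints=[Taint("workload", "search", "NoSchedule")],
)
booking_pod = Pod("booking")
search_pod = Pod(
    "search",
    node_selector={"workload": "search"},
    tolerations=[Toleration("workload", "search", "NoSchedule")],
)

for pod in (booking_pod, search_pod):
    for node in (general, search):
        print(pod.name, "->", node.name, can_schedule(pod, node))
```

The taint alone keeps other services off the search nodes, but it does not stop the Search Service from landing on a general node; the `nodeSelector` closes that gap.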

EKS control plane logging is enabled for all log types: api, audit, authenticator, controllerManager, scheduler.

An OIDC provider is provisioned alongside the cluster to support IRSA. Each service's ServiceAccount is annotated with its dedicated IAM role ARN, granting least-privilege AWS API access without node-level credentials.

Database — Amazon RDS Aurora PostgreSQL

Terraform module: terraform/modules/rds/

Three independent Aurora clusters, one per domain. The Availability Service shares the practitioner cluster for its schedule/exception data.

| Cluster | Writer | Reader Replicas | Tables |
|---|---|---|---|
| `booking` | `db.r6g.xlarge` | 1 | bookings |
| `practitioner` | `db.r6g.large` | 1 | practitioners, schedules, exceptions |
| `patient` | `db.r6g.large` | 1 | patients |

Common configuration across all clusters:

- Aurora PostgreSQL engine

- Storage encryption enabled

- Deletion protection enabled

- Automated backups — 7-day retention, daily backup window 03:00–04:00 UTC

- Master credentials managed by AWS Secrets Manager with automatic rotation

- Tables partitioned by `country_code` for multi-country isolation

Cache — Amazon ElastiCache (Redis)

Terraform module: terraform/modules/elasticache/

| Setting | Value |
|---|---|
| Mode | Cluster mode enabled |
| Shards | 3 |
| Replicas per shard | 2 |
| Instance type | cache.r6g.xlarge |
| Failover | Multi-AZ with automatic failover |
| Encryption | At rest and in transit |
| Snapshot retention | 3 days (daily window 04:00–05:00 UTC) |

Use cases:

- Availability bitmaps — 24-bit integer per practitioner per day (7M keys ≈ 2–4 GB)

- Search result caching — TTL 30–60 seconds

- Booking slot pre-checks — reduces RDS contention under load
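The availability bitmap can be pictured as a plain integer whose bits mark free slots. The sketch below assumes one bit per slot with 24 slots per day, bit `i` meaning "slot `i` is free", and a key layout of `avail:{practitioner_id}:{date}`. The slot granularity, the bit semantics, and the key format are all assumptions for illustration, and the code manipulates the integer locally instead of talking to Redis.

```python
from datetime import date

SLOTS_PER_DAY = 24                      # matches the 24-bit integer per practitioner per day
FULL_MASK = (1 << SLOTS_PER_DAY) - 1    # keeps values within 24 bits


def bitmap_key(practitioner_id: str, day: date) -> str:
    """Hypothetical cache key layout; the real key format is not documented here."""
    return f"avail:{practitioner_id}:{day.isoformat()}"


def _check_slot(slot: int) -> None:
    if not 0 <= slot < SLOTS_PER_DAY:
        raise ValueError(f"slot must be in 0..{SLOTS_PER_DAY - 1}, got {slot}")


def mark_free(bitmap: int, slot: int) -> int:
    _check_slot(slot)
    return (bitmap | (1 << slot)) & FULL_MASK


def mark_booked(bitmap: int, slot: int) -> int:
    _check_slot(slot)
    return bitmap & ~(1 << slot) & FULL_MASK


def is_free(bitmap: int, slot: int) -> bool:
    _check_slot(slot)
    return bool((bitmap >> slot) & 1)


def free_slots(bitmap: int) -> list:
    return [slot for slot in range(SLOTS_PER_DAY) if is_free(bitmap, slot)]


bitmap = 0
for slot in (9, 10, 14):
    bitmap = mark_free(bitmap, slot)
bitmap = mark_booked(bitmap, 10)

print(bitmap_key("prac-123", date(2025, 1, 15)), free_slots(bitmap))
```

A pre-check of this kind answers "is this slot still free?" with a single bit test, which is why it can shield the database from most contended booking attempts.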

Search — Amazon OpenSearch

Terraform module: terraform/modules/opensearch/

| Setting | Value |
|---|---|
| Data nodes | 3 × r6g.xlarge.search with 100 GB EBS each |
| Master nodes | Dedicated (managed by AWS) |
| Indices | Per-country (practitioners_fr, practitioners_de, …) |
| Access control | Fine-grained; EKS pods authenticate via SigV4 through IRSA |
| Ingestion | Kafka consumer (availability-events, practitioner-events) |

Replica shards on each index allow read scaling without a separate replica cluster. If a data node is lost, replica shards are promoted and the cluster self-heals.

Event Bus — Amazon MSK (Managed Kafka)

Terraform module: terraform/modules/msk/

| Setting | Value |
|---|---|
| Brokers | 3 × kafka.m5.xlarge across 3 AZs |
| Replication factor | 3 |
| Min in-sync replicas | 2 |
| Partitions per topic | 6 (default) |
| Partitioning key | practitioner_id |
| Retention | 7 days / 168 hours |
| Encryption | TLS client-to-broker and in-cluster |
| Auto topic creation | Disabled; topics provisioned explicitly via Terraform |
| Broker logs | Shipped to CloudWatch (/aws/msk/{project}, 14-day retention) |

Topics: booking-events, practitioner-events, availability-events
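Two consequences of these settings are worth spelling out: keying by `practitioner_id` keeps every event for one practitioner on a single partition (and therefore in order), and a replication factor of 3 with a minimum of 2 in-sync replicas tolerates the loss of one broker for writes. The sketch below illustrates both. The hash is a stand-in (`zlib.crc32`), not Kafka's murmur2-based default partitioner, so the partition numbers will not match a real cluster. The write check assumes producers use `acks=all`, which is not stated in this document.

```python
import zlib

PARTITIONS = 6
REPLICATION_FACTOR = 3
MIN_INSYNC_REPLICAS = 2


def partition_for(practitioner_id: str, partitions: int = PARTITIONS) -> int:
    """Map a key to a partition with a stable hash (crc32 as a stand-in for murmur2)."""
    return zlib.crc32(practitioner_id.encode("utf-8")) % partitions


def accepts_writes(
    brokers_down: int,
    replication_factor: int = REPLICATION_FACTOR,
    min_isr: int = MIN_INSYNC_REPLICAS,
) -> bool:
    """With 3 brokers and RF 3, each lost broker removes one replica of every partition.

    Assumes acks=all producers, for which the broker rejects writes once the
    in-sync replica count drops below min.insync.replicas.
    """
    return replication_factor - brokers_down >= min_isr


events = ["prac-1", "prac-2", "prac-1", "prac-3", "prac-1"]  # hypothetical keys
print([partition_for(key) for key in events])
print({down: accepts_writes(down) for down in range(REPLICATION_FACTOR + 1)})
```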

Notifications — SES + SNS

Terraform module: terraform/modules/notifications/

- Amazon SES — transactional email (confirmations, reminders, cancellations). Domain identity configured and verified via Terraform.

- Amazon SNS — SMS and mobile push notifications.

The Notification Service is the sole producer to SES and SNS. It has an IRSA role scoped to only the ses:SendEmail and sns:Publish actions.
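As an illustration of that scoping, the following sketch builds an IAM policy document containing only those two actions and serialises it as JSON. The statement ID and the `"*"` resource are placeholders chosen for this example; the actual policy in `terraform/modules/notifications/` may restrict resources to specific identities or topics.

```python
import json

ALLOWED_ACTIONS = ["ses:SendEmail", "sns:Publish"]


def notification_policy(resource: str = "*") -> dict:
    """Build a minimal IAM policy document.

    The resource default "*" is a placeholder assumption, not the real scoping.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "NotificationSend",  # hypothetical statement ID
                "Effect": "Allow",
                "Action": ALLOWED_ACTIONS,
                "Resource": resource,
            }
        ],
    }


def granted_actions(policy: dict) -> set:
    """Collect every action granted by Allow statements."""
    actions = set()
    for statement in policy["Statement"]:
        if statement["Effect"] == "Allow":
            actions.update(statement["Action"])
    return actions


policy = notification_policy()
print(json.dumps(policy, indent=2))
```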

Tagging Strategy

All resources receive the following default tags via the AWS provider default_tags block:

| Tag | Value |
|---|---|
| `Project` | `appointment-system` (from `var.project`) |
| `Environment` | `production` |
| `ManagedBy` | `terraform` |
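The effect of `default_tags` on an individual resource can be modelled as a dictionary merge: provider-level defaults apply everywhere, and a tag set on the resource itself wins when the keys collide. The sketch below mirrors that behaviour; the `Name` tag and its value are hypothetical examples.

```python
DEFAULT_TAGS = {
    "Project": "appointment-system",
    "Environment": "production",
    "ManagedBy": "terraform",
}


def effective_tags(resource_tags=None) -> dict:
    """Merge provider defaults with resource-level tags; resource tags take precedence."""
    return {**DEFAULT_TAGS, **(resource_tags or {})}


def missing_default_tags(tags: dict) -> list:
    """List default tag keys absent from a resource, useful for an audit script."""
    return sorted(key for key in DEFAULT_TAGS if key not in tags)


# "Name" is a hypothetical resource-level tag.
tags = effective_tags({"Name": "booking-writer"})
print(tags)
print(missing_default_tags({"Name": "untagged-resource"}))
```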