Architecture Overview

System Architecture Diagram

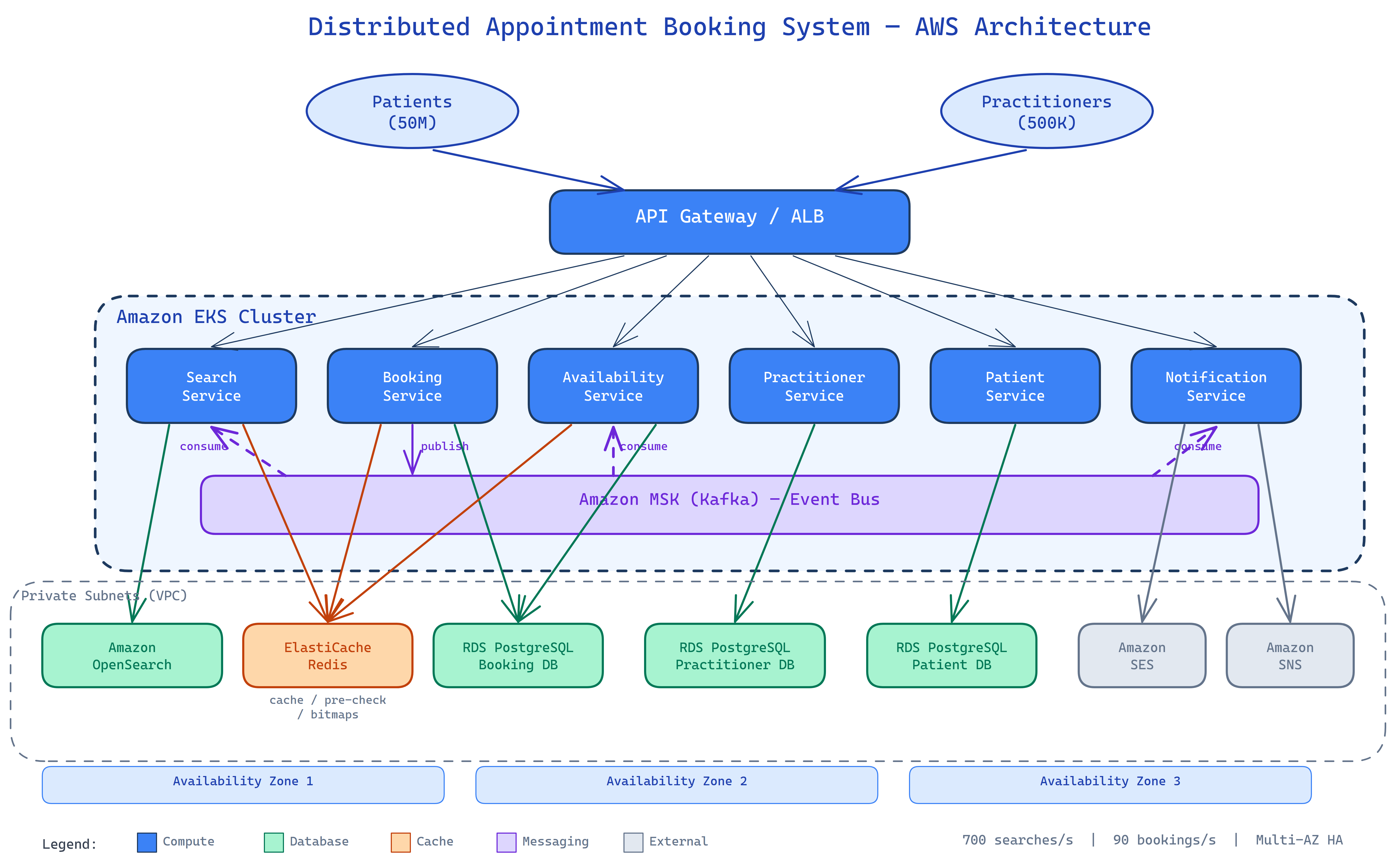

The diagram above shows the full component topology: the public-facing API Gateway, the six backend services running on EKS, the three data tiers (RDS, Redis, OpenSearch), the Kafka event bus, and the AWS-managed notification services.

Design Goals

| Goal | Target |
|---|---|
| Patient scale | 50 million registered patients |
| Practitioner scale | 500,000 practitioners |
| Search throughput | 700 requests/second |
| Booking throughput | 90 bookings/second |
| Booking correctness | Zero double-bookings, guaranteed by a database constraint |
| Availability | Multi-AZ across 3 AZs; no single point of failure |
| Expansion | New country live without code changes (config + index + partition) |

Architectural Principles

Microservices with domain boundaries

Each service owns its data store. No service reads another service's database directly. Cross-service communication is either synchronous (via the ALB + internal DNS) or asynchronous (via Kafka events).

Event-driven read model updates

Writes go to the authoritative store (RDS). The search index (OpenSearch) and the availability cache (Redis) are derived read models, kept current by consuming Kafka events. If either is lost, it can be rebuilt by replaying the 7-day Kafka log.
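
The rebuild-by-replay idea can be sketched in a few lines. This is a minimal in-memory illustration, not the services' actual consumer code: the `Event` fields, the event names `SlotBooked` and `SlotReleased`, and the `AvailabilityView` class are all hypothetical, and a single partition with monotonically increasing offsets is assumed.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Event:
    offset: int           # position in the log (hypothetical field)
    kind: str             # "SlotBooked" or "SlotReleased" (hypothetical names)
    practitioner_id: int
    slot: str


@dataclass
class AvailabilityView:
    """Derived read model: the set of taken slots per practitioner."""
    taken: dict = field(default_factory=dict)
    last_offset: int = -1

    def apply(self, event: Event) -> None:
        # Ignore events at or below the last applied offset,
        # so a redelivered event does not change the view.
        if event.offset <= self.last_offset:
            return
        slots = self.taken.setdefault(event.practitioner_id, set())
        if event.kind == "SlotBooked":
            slots.add(event.slot)
        elif event.kind == "SlotReleased":
            slots.discard(event.slot)
        self.last_offset = event.offset


def rebuild(log):
    """Reconstruct the view from scratch by replaying the log in order."""
    view = AvailabilityView()
    for event in log:
        view.apply(event)
    return view
```

Because `apply` is idempotent with respect to offsets, the same function can serve both live consumption and a full replay after the read model is lost.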

Strong consistency where it matters

The booking write path uses a PostgreSQL unique partial index as the definitive conflict gate. Redis pre-checks reduce contention but are not the source of truth. See ADR-004.
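
To illustrate how a unique partial index acts as the conflict gate, here is a minimal sketch. It uses Python's built-in `sqlite3` module as a stand-in for PostgreSQL (both support `CREATE UNIQUE INDEX ... WHERE`), and the table, column, and status names are assumptions for illustration, not the schema described in ADR-004.

```python
import sqlite3

# Hypothetical schema: names are illustrative, not taken from the real system.
SCHEMA = """
CREATE TABLE appointments (
    id              INTEGER PRIMARY KEY,
    practitioner_id INTEGER NOT NULL,
    slot_start      TEXT    NOT NULL,
    patient_id      INTEGER NOT NULL,
    status          TEXT    NOT NULL DEFAULT 'booked'
);
-- Partial unique index: only active bookings compete for a slot,
-- so a cancelled row does not block a later rebooking.
CREATE UNIQUE INDEX uq_active_slot
    ON appointments (practitioner_id, slot_start)
    WHERE status = 'booked';
"""


def open_db():
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    return conn


def book(conn, practitioner_id, slot_start, patient_id):
    """Return True if the slot was booked, False if it was already taken."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(
                "INSERT INTO appointments (practitioner_id, slot_start, patient_id) "
                "VALUES (?, ?, ?)",
                (practitioner_id, slot_start, patient_id),
            )
        return True
    except sqlite3.IntegrityError:
        # The index rejected a second active booking for the same slot.
        return False


def cancel(conn, practitioner_id, slot_start):
    """Mark the active booking for a slot as cancelled, freeing the slot."""
    with conn:
        conn.execute(
            "UPDATE appointments SET status = 'cancelled' "
            "WHERE practitioner_id = ? AND slot_start = ? AND status = 'booked'",
            (practitioner_id, slot_start),
        )
```

In this sketch the database, not application code, decides the winner of a race: two concurrent inserts for the same slot cannot both commit, whatever a cache pre-check reported beforehand.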

Horizontal scaling by default

All services run behind a Kubernetes Horizontal Pod Autoscaler. The Search Service runs on its own memory-optimised node group (r6i.xlarge). Critical services (Booking, Availability, Patient) deploy via canary to limit blast radius.
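
For intuition about how the autoscaler sizes a deployment, the sketch below reproduces the replica calculation given in the Kubernetes Horizontal Pod Autoscaler documentation: the desired count is the current count scaled by the ratio of observed to target metric, rounded up, with no action when the ratio is within a tolerance of 1.0 (0.1 by default). The metric values in the usage example are illustrative only, not targets configured for these services, and the sketch leaves out the min/max replica bounds and stabilisation windows a real HPA also applies.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric, tolerance=0.1):
    """Approximate the HPA replica calculation for a single metric."""
    ratio = current_metric / target_metric
    # Within tolerance of the target: leave the replica count unchanged.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    return math.ceil(current_replicas * ratio)


# Illustrative values: 4 pods averaging 90% CPU against a 60% target.
print(desired_replicas(4, 90, 60))
```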

Component Summary

| Layer | Component | Technology |
|---|---|---|
| Edge | API Gateway + ALB | AWS ALB + API Gateway |
| Compute | All 6 services | Amazon EKS (Kubernetes) |
| Booking store | Appointments | RDS Aurora PostgreSQL (db.r6g.xlarge writer) |
| Practitioner store | Profiles + schedules | RDS Aurora PostgreSQL (db.r6g.large writer) |
| Patient store | Profiles + history | RDS Aurora PostgreSQL (db.r6g.large writer) |
| Cache | Availability bitmaps, search cache | ElastiCache Redis (cluster mode, 3 shards) |
| Search index | Practitioner search | Amazon OpenSearch (3 × r6g.xlarge.search) |
| Event bus | Async propagation | Amazon MSK Kafka (3 × kafka.m5.xlarge) |
| Notifications | Email + SMS/push | Amazon SES + SNS |
| Secrets | DB credentials | AWS Secrets Manager (auto-rotation) |

Security Posture

- All data stores sit in private subnets — no public internet access.

- TLS in transit everywhere; AES-256 at rest on RDS, ElastiCache, OpenSearch, and MSK.

- Service pods authenticate to AWS APIs via IRSA (IAM Roles for Service Accounts) — no node-level credentials.

- Every container runs as UID 1000, with a read-only root filesystem and all Linux capabilities dropped.

- Every build is scanned by Snyk (dependencies) and Trivy (container images). CRITICAL/HIGH findings block the pipeline.

For full network topology, see Infrastructure.