CI/CD Pipelines

Pipeline Architecture

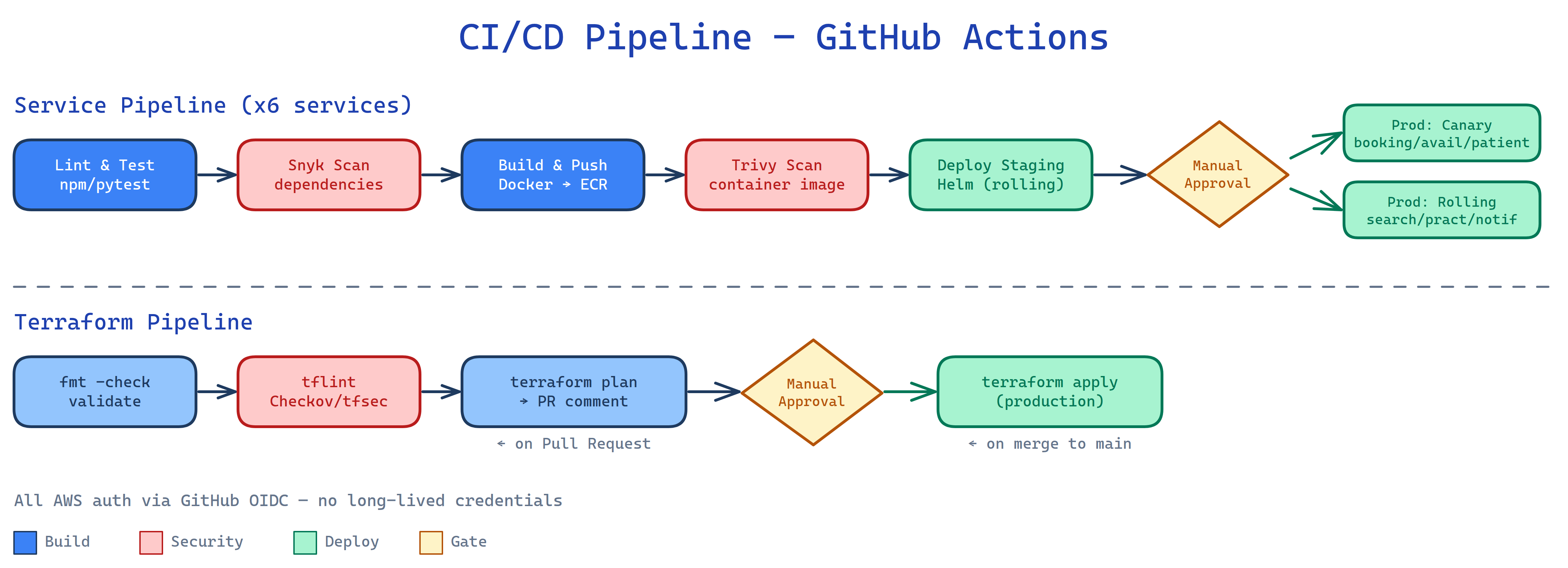

All six services share a single reusable GitHub Actions workflow (.github/workflows/service-ci-cd.yml). Each service has a thin caller workflow that supplies service-specific parameters. This keeps per-service files under 60 lines while all pipeline logic lives in one place.

    .github/workflows/
    ├── service-ci-cd.yml          # Reusable pipeline: all logic lives here
    ├── booking-service.yml        # Caller: canary, node, services/booking
    ├── availability-service.yml   # Caller: canary, node, services/availability
    ├── patient-service.yml        # Caller: canary, node, services/patient
    ├── search-service.yml         # Caller: rolling, node, services/search
    ├── notification-service.yml   # Caller: rolling, node, services/notification
    ├── practitioner-service.yml   # Caller: rolling, node, services/practitioner
    └── terraform.yml              # Separate pipeline for infrastructure changes
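As an illustration of how thin a caller can be, the sketch below shows what booking-service.yml might look like. The input names (`service-name`, `runtime`, `deploy-strategy`, `working-directory`) and the path filters are assumptions made for this example; the real names are whatever service-ci-cd.yml declares under `on.workflow_call.inputs`.

```yaml
# .github/workflows/booking-service.yml (illustrative sketch, not the actual file)
name: booking-service

on:
  push:
    paths:
      - "services/booking/**"   # assumed path filter
  pull_request:
    paths:
      - "services/booking/**"   # assumed path filter

jobs:
  ci-cd:
    # Delegate all pipeline logic to the shared reusable workflow.
    uses: ./.github/workflows/service-ci-cd.yml
    with:
      # Hypothetical input names; match them to the reusable workflow.
      service-name: booking
      runtime: node
      deploy-strategy: canary
      working-directory: services/booking
    secrets: inherit
```

Keeping only parameters in the caller means a change to the pipeline logic is made once in service-ci-cd.yml and picked up by every service.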

Service Pipeline

Stages

Stages 1–4 run on every push and pull request. Stages 5–6 only run on merges to main.

lint-and-test → dependency-scan → build-and-push → image-scan → deploy-staging → [approval] → deploy-production

Stage 1: lint-and-test

Tool: npm / pytest / golangci-lint / Maven (selected by runtime input)

The reusable workflow supports four runtimes — Node.js, Python, Go, and Java — each with its own setup, dependency install, lint, and test steps. Unused runtimes are skipped via if: inputs.runtime == '...' conditions. Test coverage is uploaded as a 7-day artifact.
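The runtime switch described above could be sketched as follows. Only two of the four runtimes are shown; the runtime versions, the `working-directory` input, and the coverage path are assumptions for illustration.

```yaml
# Illustrative steps inside the lint-and-test job of the reusable workflow.
steps:
  - uses: actions/checkout@v4

  # Only the setup step whose condition matches inputs.runtime runs.
  - name: Set up Node.js
    if: inputs.runtime == 'node'
    uses: actions/setup-node@v4
    with:
      node-version: "20"        # assumed version

  - name: Set up Python
    if: inputs.runtime == 'python'
    uses: actions/setup-python@v5
    with:
      python-version: "3.12"    # assumed version

  - name: Install, lint, and test (Node.js)
    if: inputs.runtime == 'node'
    working-directory: ${{ inputs.working-directory }}   # hypothetical input
    run: |
      npm ci
      npm run lint
      npm test

  - name: Upload coverage
    uses: actions/upload-artifact@v4
    with:
      name: coverage
      path: ${{ inputs.working-directory }}/coverage/   # assumed output directory
      retention-days: 7
```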

Stage 2: dependency-scan

Tool: Snyk

Scans the service manifest (package.json, requirements.txt, go.mod) for known CVEs. Blocks the pipeline on HIGH or CRITICAL severity findings. Results are uploaded to the GitHub Advanced Security tab as SARIF.
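A minimal sketch of such a scan for a Node.js service is shown below, using the Snyk GitHub Action. The secret name and the SARIF file name are assumptions; other runtimes would use the matching variant of the action (for example `snyk/actions/python`).

```yaml
steps:
  - uses: actions/checkout@v4

  - name: Snyk dependency scan
    uses: snyk/actions/node@master
    env:
      SNYK_TOKEN: ${{ secrets.SNYK_TOKEN }}   # assumed secret name
    with:
      # Fail the step on HIGH or CRITICAL findings and write a SARIF report.
      args: --severity-threshold=high --sarif-file-output=snyk.sarif

  - name: Upload SARIF to GitHub
    if: always()   # upload the report even when the scan step failed
    uses: github/codeql-action/upload-sarif@v3
    with:
      sarif_file: snyk.sarif
```

The `if: always()` condition matters here: without it, a blocking finding would stop the job before the results reach the Security tab.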

Stage 3: build-and-push

Tool: Docker Buildx + Amazon ECR

Uses docker/build-push-action with ECR as the BuildKit layer cache backend. Images are tagged with the full SHA, short SHA, and latest. SBOM and provenance attestations are generated on every push.

    - uses: docker/build-push-action@v6
      with:
        file: docker/Dockerfile
        tags: |
          ${{ env.ECR_REGISTRY }}/service:${{ github.sha }}
          ${{ env.ECR_REGISTRY }}/service:latest
        provenance: true
        sbom: true

CI always passes --set image.digest to Helm so Kubernetes pulls by digest, not by the mutable SHA tag.
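One way the digest could flow from the build step into Helm is sketched below. For brevity both steps sit in one job, whereas the real pipeline deploys from separate jobs and would pass the digest through job outputs. The `buildcache` tag, the release name, the chart path, and the `image.repository` value key are assumptions; only `image.digest` comes from the text above.

```yaml
steps:
  - name: Build and push
    id: build
    uses: docker/build-push-action@v6
    with:
      file: docker/Dockerfile
      push: true
      tags: ${{ env.ECR_REGISTRY }}/service:${{ github.sha }}
      # Registry-backed BuildKit layer cache; "buildcache" is an assumed tag name.
      cache-from: type=registry,ref=${{ env.ECR_REGISTRY }}/service:buildcache
      cache-to: type=registry,ref=${{ env.ECR_REGISTRY }}/service:buildcache,mode=max,image-manifest=true

  - name: Deploy by digest
    # steps.build.outputs.digest has the form sha256:<hex> and identifies
    # exactly the image that was just pushed.
    run: |
      helm upgrade --install service ./chart \
        --set image.repository=${{ env.ECR_REGISTRY }}/service \
        --set image.digest=${{ steps.build.outputs.digest }}
```

Pinning by digest means a later push that reuses a tag cannot change what a running release resolves to.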

Stage 4: image-scan

Tool: Trivy

Scans the freshly pushed ECR image for OS and library vulnerabilities. Blocks the pipeline on CRITICAL and HIGH findings. Results are uploaded to GitHub Advanced Security as SARIF.
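A sketch of this scan using the Trivy GitHub Action follows. The image reference and the SARIF file name are assumptions, and the action should be pinned to a release tag in practice.

```yaml
steps:
  - name: Trivy image scan
    uses: aquasecurity/trivy-action@master   # pin to a release tag in practice
    with:
      image-ref: ${{ env.ECR_REGISTRY }}/service:${{ github.sha }}
      vuln-type: os,library
      severity: CRITICAL,HIGH
      # By default SARIF output includes every severity; keep it to the two above.
      limit-severities-for-sarif: true
      exit-code: "1"          # a non-zero exit code blocks the pipeline
      format: sarif
      output: trivy.sarif

  - name: Upload SARIF to GitHub
    if: always()   # upload the report even when the scan step failed
    uses: github/codeql-action/upload-sarif@v3
    with:
      sarif_file: trivy.sarif
```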

Stage 5: deploy-staging

Trigger: merges to main only

Staging always uses a rolling strategy regardless of the service's production strategy. Deployed via Helm with --atomic — if the rollout fails, Helm automatically rolls back to the previous release.

Stage 6: deploy-production

Trigger: merges to main, after staging succeeds

Pauses for manual approval from the platform team via GitHub Environments before proceeding. The production job uses the service's configured strategy (rolling or canary). See Deployment for the canary promotion flow.
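The two deploy stages might be wired together roughly as below. The environment names, release name, chart path, namespace, timeout, and the `deploy-strategy` input are assumptions, and cluster authentication is omitted. The approval pause itself is not expressed in YAML: it comes from required reviewers configured on the GitHub Environment that the production job references.

```yaml
jobs:
  deploy-staging:
    if: github.ref == 'refs/heads/main' && github.event_name == 'push'
    needs: image-scan
    runs-on: ubuntu-latest
    environment: staging            # assumed environment name
    steps:
      - uses: actions/checkout@v4
      - name: Helm rolling deploy
        # --atomic rolls the release back automatically if the rollout fails.
        run: |
          helm upgrade --install booking ./chart \
            --namespace staging \
            --atomic --timeout 5m

  deploy-production:
    needs: deploy-staging
    runs-on: ubuntu-latest
    # Required reviewers on this environment hold the job until it is approved.
    environment: production         # assumed environment name
    steps:
      - uses: actions/checkout@v4
      - name: Deploy with the service's configured strategy
        # Placeholder step; "deploy-strategy" is a hypothetical input.
        run: echo "deploying with strategy ${{ inputs.deploy-strategy }}"
```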

AWS Authentication

All AWS interactions use GitHub OIDC — no long-lived access keys are stored in GitHub. Three IAM roles are assumed by job type:

| IAM Role | Used By |
|---|---|
| github-actions-ecr-push | Build & Push, Image Scan |
| github-actions-eks-deploy-staging | Deploy Staging |
| github-actions-eks-deploy-production | Deploy Production |
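A job assumes one of these roles through OIDC roughly as sketched below. The account ID is a placeholder, and the region is assumed to match the one listed for the state backend.

```yaml
jobs:
  build-and-push:
    runs-on: ubuntu-latest
    permissions:
      id-token: write   # allows the job to request an OIDC token from GitHub
      contents: read
    steps:
      - name: Assume the ECR push role via OIDC
        uses: aws-actions/configure-aws-credentials@v4
        with:
          # 123456789012 is a placeholder account ID.
          role-to-assume: arn:aws:iam::123456789012:role/github-actions-ecr-push
          aws-region: eu-west-1   # assumed region

      - name: Log in to Amazon ECR
        id: ecr
        uses: aws-actions/amazon-ecr-login@v2
```

The credentials obtained this way are short-lived and scoped to the role's trust policy, so nothing needs to be stored as a repository secret.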

Concurrency Control

One deployment per service per ref at a time. A new push to a PR branch cancels the in-flight run for that branch. The production job is protected by the approval gate and cannot be auto-cancelled.
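One way to express this policy is a workflow-level concurrency block like the sketch below. The group key and the `service-name` input are assumptions, and the actual workflow may scope cancellation differently, for example per job.

```yaml
concurrency:
  # One group per service and ref; "service-name" is a hypothetical input.
  group: ${{ inputs.service-name }}-${{ github.ref }}
  # Cancel superseded runs on PR branches, but never a run on main.
  cancel-in-progress: ${{ github.ref != 'refs/heads/main' }}
```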

Terraform Pipeline

The terraform.yml pipeline manages terraform/environments/production/.

Trigger: any push or PR touching terraform/** or the pipeline file itself.
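In workflow syntax, that trigger could look like the following sketch. The pipeline file path is taken from the workflow listing earlier on this page.

```yaml
on:
  push:
    paths:
      - "terraform/**"
      - ".github/workflows/terraform.yml"
  pull_request:
    paths:
      - "terraform/**"
      - ".github/workflows/terraform.yml"
```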

Stages on Every PR

The format check fails if any .tf file in the terraform/ tree is not formatted.

terraform validate checks HCL syntax and provider schema conformance. tflint (v0.53.0) runs the AWS ruleset plugin to catch provider-specific issues (deprecated instance types, missing tags, etc.).

The Checkov security and compliance scan runs across all Terraform modules. It runs in soft-fail mode so the plan comment is always generated even if findings exist. SARIF is uploaded to the Security tab.

Two checks are intentionally suppressed with documented rationale in the code:

- CKV_AWS_126
- CKV2_AWS_5

The plan output is posted as a collapsible PR comment (updated in-place on re-runs). The plan binary, JSON, and text are archived as a 30-day artifact for post-incident reviews.

Stages on Merge to Main

The apply job re-runs terraform plan immediately before apply to catch any drift that occurred between the PR plan and the approval.

Gated by the production-infrastructure GitHub Environment (requires platform team approval). Uses the apply IAM role (github-actions-terraform-apply) which has broader permissions than the plan role.
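Pulling the stages of this section together, a trimmed sketch of the check and apply jobs might look like this. The job names, step layout, Checkov action version, SARIF file name, and the placeholder account ID are assumptions; the plan comment and artifact steps are left out.

```yaml
jobs:
  checks:                      # runs on every PR
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: hashicorp/setup-terraform@v3
      - name: Format check
        run: terraform fmt -check -recursive terraform/
      - name: Validate
        working-directory: terraform/environments/production
        run: |
          terraform init -backend=false
          terraform validate
      - uses: terraform-linters/setup-tflint@v4
        with:
          tflint_version: v0.53.0
      - name: tflint
        working-directory: terraform/environments/production
        run: |
          tflint --init
          tflint
      - name: Checkov (soft-fail)
        uses: bridgecrewio/checkov-action@v12
        with:
          directory: terraform
          framework: terraform
          soft_fail: true      # report findings without failing the job
          output_format: cli,sarif
          output_file_path: console,checkov.sarif

  apply:                       # runs on merge to main
    if: github.ref == 'refs/heads/main' && github.event_name == 'push'
    needs: checks
    runs-on: ubuntu-latest
    environment: production-infrastructure   # approval gate
    permissions:
      id-token: write
      contents: read
    defaults:
      run:
        working-directory: terraform/environments/production
    steps:
      - uses: actions/checkout@v4
      - uses: hashicorp/setup-terraform@v3
      - uses: aws-actions/configure-aws-credentials@v4
        with:
          # 123456789012 is a placeholder account ID.
          role-to-assume: arn:aws:iam::123456789012:role/github-actions-terraform-apply
          aws-region: eu-west-1
      - run: terraform init -input=false
      - name: Fresh plan immediately before apply
        run: terraform plan -input=false -out=tfplan
      - name: Apply exactly the plan just produced
        run: terraform apply -input=false tfplan
```

Applying the saved `tfplan` file, rather than running a bare `terraform apply`, ensures that what gets applied is what the fresh plan showed.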

Terraform Concurrency

cancel-in-progress: false means a second merge queues behind the running apply rather than cancelling it, preventing partial apply and state drift.
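A minimal sketch of the corresponding setting is shown below; the group name is an assumption.

```yaml
concurrency:
  group: terraform-production   # assumed group name
  cancel-in-progress: false     # let a running apply finish; later runs wait
```

Note that GitHub keeps at most one pending run per concurrency group, so if several merges land while an apply is running, only the most recent one stays queued.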

State Backend

| Setting | Value |

|---|---|

| S3 bucket | appointment-system-tfstate |

| Key | production/terraform.tfstate |

| DynamoDB lock table | terraform-locks |

| Encryption | Enabled |

| Region | eu-west-1 |